Diverging Frameworks Across the US, UK, EU, Singapore and China

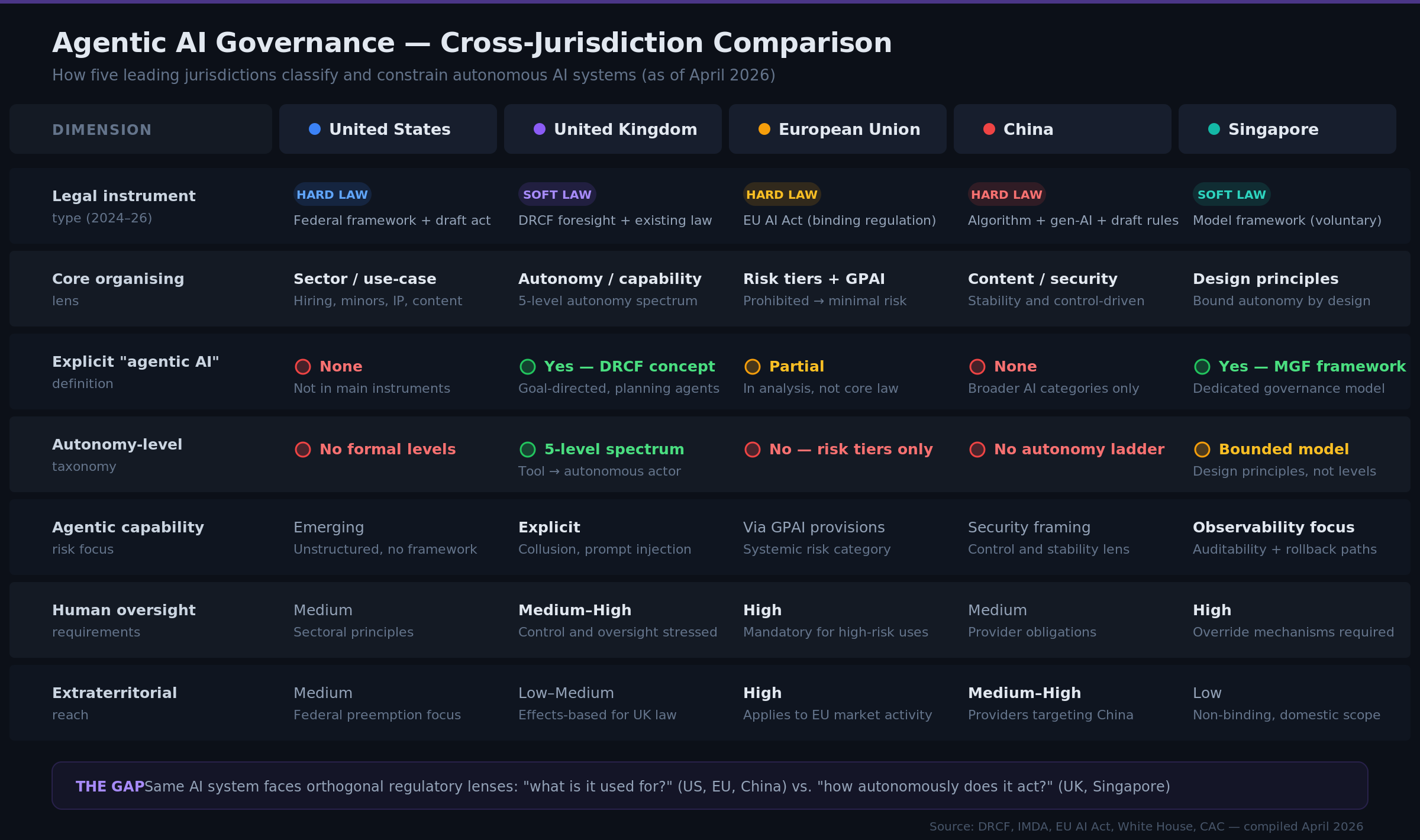

Five jurisdictions are now actively shaping how agentic AI will be governed, and each frames the problem through a different lens. If your organisation deploys AI systems that plan, decide, and act autonomously across borders, you are already operating inside a governance gap that no single compliance framework currently addresses.

This piece maps the divergence, explains why it creates real architectural exposure, and outlines what technology and risk leaders should be tracking.

What is happening

UK: classifying AI by how autonomous it is

In late March 2026, the UK's Digital Regulation Cooperation Forum (DRCF — a joint body of the CMA, FCA, ICO, and Ofcom) published "The Future of Agentic AI," a foresight paper that introduces a five-level autonomy spectrum for AI systems.

The paper defines agentic AI as systems that pursue goals, plan action sequences, and act on a user's behalf. It organises the landscape from simple tools at one end through to highly autonomous actors with broad action spaces at the other. Most currently deployed systems, the DRCF notes, sit in the middle — "enhanced assistants" or "bounded agents" — which is also where commercial adoption is moving fastest.

The DRCF explicitly names near-term risks that other jurisdictions have not yet formalised: algorithmic collusion (agents coordinating to distort markets), harmful action bundling, and prompt injection (external content hijacking an agent's behaviour). It also stresses that existing UK law already applies — no new legislation is needed to regulate what is already being deployed.

US: regulating AI by what it is used for

The US takes a fundamentally different approach. The March 2026 National Policy Framework for Artificial Intelligence and the emerging federal AI legislation organise obligations around sectors, protected populations, and use cases: hiring and employment, children's safety, content and speech, intellectual property, worker protections, competition, and national security.

There is no "agentic AI" definition in these instruments. No autonomy spectrum. No numbered levels. A system is classified by the domain it operates in and the population it affects, not by how independently it acts.

Singapore: bounding autonomy by design

Singapore launched the world's first governance framework specifically for agentic AI in January 2026 — the Model AI Governance Framework for Agentic AI. It is soft law (non-binding), but it provides detailed guidance on the design questions that matter in practice: how to assess and dynamically bound autonomy, how to constrain tool access and data permissions, how to maintain meaningful human override, and how to ensure observability, auditability, and rollback for agent behaviour.

Where the UK provides an autonomy ladder, Singapore provides an engineering checklist for keeping agents within defined boundaries.
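To make those four design questions concrete, here is a minimal Python sketch of an agent wrapper with a tool allowlist, a human approval gate, an audit log, and rollback. It is an illustration only: `BoundedAgent`, its fields, the tool names, and the stubbed `approve` rule are all hypothetical and do not come from the Singapore framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BoundedAgent:
    """Hypothetical wrapper; names and structure are assumptions for illustration."""
    allowed_tools: set[str]                       # constrains tool access
    needs_approval: set[str]                      # tools gated by human override
    approve: Callable[[str, dict], bool]          # stand-in for a human reviewer
    audit_log: list[dict] = field(default_factory=list)
    undo_stack: list[Callable[[], object]] = field(default_factory=list)

    def _record(self, tool: str, args: dict, outcome: str) -> None:
        # Every decision is logged, including blocked and rejected ones.
        self.audit_log.append({"tool": tool, "args": args, "outcome": outcome})

    def act(self, tool: str, args: dict,
            do: Callable[[], object], undo: Callable[[], object]) -> bool:
        if tool not in self.allowed_tools:
            self._record(tool, args, "blocked")
            return False
        if tool in self.needs_approval and not self.approve(tool, args):
            self._record(tool, args, "rejected")
            return False
        do()
        self.undo_stack.append(undo)              # remember how to reverse it
        self._record(tool, args, "executed")
        return True

    def rollback(self) -> int:
        # Undo executed actions in reverse order and report how many were undone.
        undone = 0
        while self.undo_stack:
            self.undo_stack.pop()()
            undone += 1
        self._record("rollback", {"undone": undone}, "executed")
        return undone


ledger: list[int] = []
agent = BoundedAgent(
    allowed_tools={"read_balance", "send_payment"},
    needs_approval={"send_payment"},
    # Stub rule standing in for a real human decision (assumption).
    approve=lambda tool, args: args["amount"] <= 100,
)
ok_small = agent.act("send_payment", {"amount": 50},
                     lambda: ledger.append(50), lambda: ledger.pop())
ok_large = agent.act("send_payment", {"amount": 500},
                     lambda: ledger.append(500), lambda: ledger.pop())
ok_shell = agent.act("run_shell", {"cmd": "ls"}, lambda: None, lambda: None)
outcomes = [entry["outcome"] for entry in agent.audit_log]
undone = agent.rollback()
```

In this sketch the small payment executes, the large one is rejected at the approval gate, and the unlisted tool is blocked outright. All three decisions land in the audit log, which is the property an auditor would look for.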

EU: risk tiers, not autonomy levels

The EU AI Act is the most developed hard law on AI to date, now moving into implementation. It classifies systems by risk tier — prohibited, high-risk, limited-risk, minimal-risk — with additional obligations for general-purpose AI models that present systemic risk. But it does not introduce "agentic AI" as a legal category or create anything resembling an autonomy-level taxonomy. AI agents are governed through existing use-case and GPAI provisions, not through a separate autonomy lens.

China: content control first

China has built a layered regulatory apparatus for algorithmic recommendation, deep synthesis, and generative AI, and in March 2026 issued draft rules for "interactive AI services." The focus is on content control, security assessments, and social stability. As with the EU, there is no explicit agentic AI definition or autonomy taxonomy — agentic systems are absorbed into broader categories of generative and interactive AI, with obligations anchored to what a system outputs and whether it threatens public order, not how many autonomous decisions it chains together.

Where we stand

Each jurisdiction is asking a different question about the same technology:

UK asks: How autonomously does this system act? It provides a five-level spectrum and names capability-specific risks like collusion and prompt injection.

US asks: What is this system used for and who does it affect? Obligations track sectors and protected groups, with no autonomy framework.

Singapore asks: Can you prove humans remain in meaningful control? It provides design principles for bounding, overriding, and auditing agent autonomy.

EU asks: What risk tier does this system fall into? The answer depends on use case and model category, not on how independently the system operates.

China asks: Does this system's output comply with content and security requirements? Autonomy is not the regulatory variable — what the system says and does in Chinese digital space is.

Why this matters for your organisation

The same system, different classifications

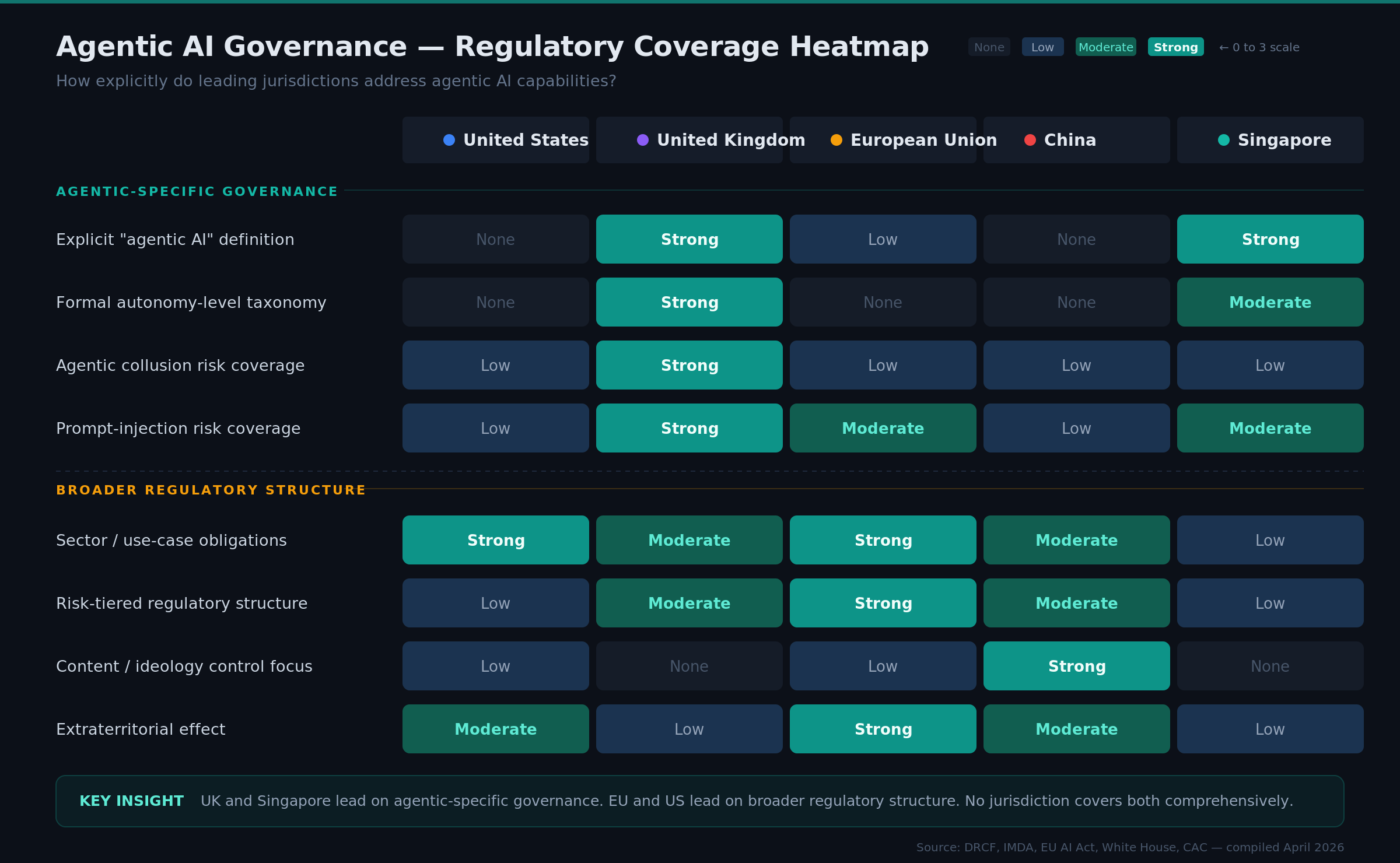

The UK framework is the first major cross-regulator document that classifies AI primarily by degree of autonomy and agentic capability, rather than by application domain. This matters because the fastest-growing enterprise deployments — orchestration agents, autonomous code generation, planning systems that chain tools — sit squarely in this agentic category.

Consider a concrete example. An orchestration agent that plans multi-step actions and executes them with human sign-off would likely be classified in the UK as a mid-level autonomous agent on the DRCF spectrum — goal-directed, multi-step, operating under oversight. In the US, that same system might be classified as a "productivity tool for employees" or an "infrastructure component," with obligations flowing from the use case and sector rather than its autonomy characteristics.

The UK foregrounds capability-based risks (collusion, prompt injection, unbounded action) while the US foregrounds domain-specific harms (bias in hiring, minors' safety, IP). These lenses are orthogonal — a system that is fully compliant under US use-case rules could still raise concerns under UK expectations for autonomy-level behaviour, and vice versa.

Add the remaining jurisdictions and the picture compounds. Singapore asks whether your design allows autonomy to be dynamically bounded and audited. The EU asks whether your system falls into a prohibited or high-risk use case. China asks whether your outputs comply with content and stability rules. Satisfying one framework does not meaningfully advance compliance with another.

Parallel compliance architectures

For multinational deployments, this divergence means organisations will increasingly need parallel compliance frameworks: one addressing "what is it used for and where is it deployed?" (US, EU, China), and another addressing "how autonomously does it act and how is that bounded?" (UK, Singapore).

This is an architecture problem, not a documentation problem. Agentic systems embed assumptions about where humans must be in the loop, how far agents can act without approval, and how agents interact with each other and external tools. Those assumptions get coded into orchestration layers, security models, and product UX — they are difficult and expensive to change after the fact.
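One way to keep those assumptions changeable is to lift them out of the orchestration code and into an explicit policy object that can vary by deployment. The Python sketch below assumes a hypothetical `AutonomyPolicy` type and two made-up profiles. The thresholds and tool names are placeholders, not values drawn from any regulator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyPolicy:
    """Hypothetical policy record; fields are illustrative assumptions."""
    max_unapproved_steps: int          # steps an agent may chain before a human gate
    external_tools: frozenset[str]     # tools the agent may call at all


# Placeholder profiles only. Real values would come from legal and risk review.
POLICIES = {
    "default": AutonomyPolicy(max_unapproved_steps=3,
                              external_tools=frozenset({"search", "email"})),
    "strict": AutonomyPolicy(max_unapproved_steps=0,
                             external_tools=frozenset({"search"})),
}


def next_step_decision(policy: AutonomyPolicy,
                       steps_since_approval: int, tool: str) -> str:
    """Return 'deny', 'ask_human', or 'allow' for the agent's next step."""
    if tool not in policy.external_tools:
        return "deny"
    if steps_since_approval >= policy.max_unapproved_steps:
        return "ask_human"
    return "allow"


decisions = [
    next_step_decision(POLICIES["default"], 1, "email"),
    next_step_decision(POLICIES["default"], 3, "search"),
    next_step_decision(POLICIES["strict"], 0, "search"),
    next_step_decision(POLICIES["default"], 0, "shell"),
]
```

The design choice is that the orchestration layer asks the policy before each step, so tightening a human-in-the-loop requirement for one market becomes a configuration change rather than a rewrite.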

Early design choices are creating lock-in

If you are building agentic AI systems today, you are making design decisions about human-in-the-loop gates, observability of multi-step plans, tool access boundaries, and rollback mechanisms that will define your compliance surface for years. Organisations that build only for one regulatory lens — say, US sector rules — may face significant retrofit costs if UK guidance, Singapore's framework, or future EU updates crystallise into enforceable requirements on agent autonomy.

Chatham House has described this divergence as a growing "deadlock" in AI governance, where agreement on high-level principles masks deep differences in how agents and autonomy are actually classified and constrained.

Practical implications by role

CIOs and CTOs should audit agentic deployments against both the use-case lens (EU/US model) and the autonomy-level lens (UK/Singapore model). If your orchestration layer does not have observability into multi-step agent plans, that is a gap regardless of jurisdiction.

CISOs should note that prompt injection and algorithmic collusion are no longer theoretical concerns: the DRCF paper names both explicitly as near-term risks. If your security model treats agents as static tools rather than dynamic actors with expanding action spaces, the threat model has a blind spot.

CROs and General Counsel should map exposure by jurisdiction and by capability, not just by use case. Internal taxonomies that can translate between regulatory frameworks will be necessary, because no external standard currently provides that translation layer.
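As a rough illustration of what such an internal taxonomy could look like, the Python sketch below keeps one profile per system and projects it onto the question each jurisdiction asks. The `SystemProfile` fields, the example values, and the mapping of fields to lenses are assumptions made for illustration. The sketch gathers evidence for each question and does not assign any legal classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical internal record; field names are illustrative assumptions."""
    name: str
    use_case: str
    sector: str
    autonomy_note: str                 # free-text description of how the agent acts
    human_signoff: bool
    output_channels: tuple[str, ...]


# The questions are taken from the jurisdiction summary in this article.
# Which profile fields feed each question is an assumption.
LENSES: dict[str, tuple[str, tuple[str, ...]]] = {
    "UK": ("How autonomously does this system act?",
           ("autonomy_note", "human_signoff")),
    "US": ("What is this system used for and who does it affect?",
           ("use_case", "sector")),
    "Singapore": ("Can you prove humans remain in meaningful control?",
                  ("human_signoff", "autonomy_note")),
    "EU": ("What risk tier does this system fall into?",
           ("use_case", "sector")),
    "China": ("Does this system's output comply with content and security requirements?",
              ("output_channels",)),
}


def evidence_by_jurisdiction(profile: SystemProfile) -> dict[str, dict]:
    """Project one system profile onto each jurisdiction's question."""
    return {
        jurisdiction: {
            "question": question,
            "evidence": {name: getattr(profile, name) for name in fields},
        }
        for jurisdiction, (question, fields) in LENSES.items()
    }


profile = SystemProfile(
    name="orchestrator",
    use_case="internal productivity",
    sector="financial services",
    autonomy_note="plans multi-step actions, executes after approval",
    human_signoff=True,
    output_channels=("internal tickets",),
)
view = evidence_by_jurisdiction(profile)
```

Here `view["US"]["evidence"]` holds only the use case and sector, while `view["UK"]["evidence"]` holds the autonomy description and sign-off flag, which makes it visible that the same system is being assessed on different variables in each place.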

Organisations that treat regulatory divergence as an input to system architecture, factoring it into design decisions early rather than handling it as a compliance exercise after deployment, will navigate this environment with the least friction.

This analysis is part of the Fault Lines series on TEKHORA Insights, tracking structural divergences in technology governance that create risk and opportunity for multinational organisations.

TEKHORA is a technology geointelligence platform. We map where your digital supply chain meets geopolitical risk across 50+ jurisdictions and counting.